Redesigning a robot programming interface to reduce errors.

*To respect NDA restrictions, this case study has been anonymized.

What were my roles?

Stakeholder management, UX Research, UX Design, UI Design, Usability Testing

Who were the users?

Automation Engineers (Senior & Junior) who spend up to 8 hours per run writing JSON code

What was the problem?

Slow and error-prone process for creating JSON "runs", leading to hours of debugging.

What was the solution?

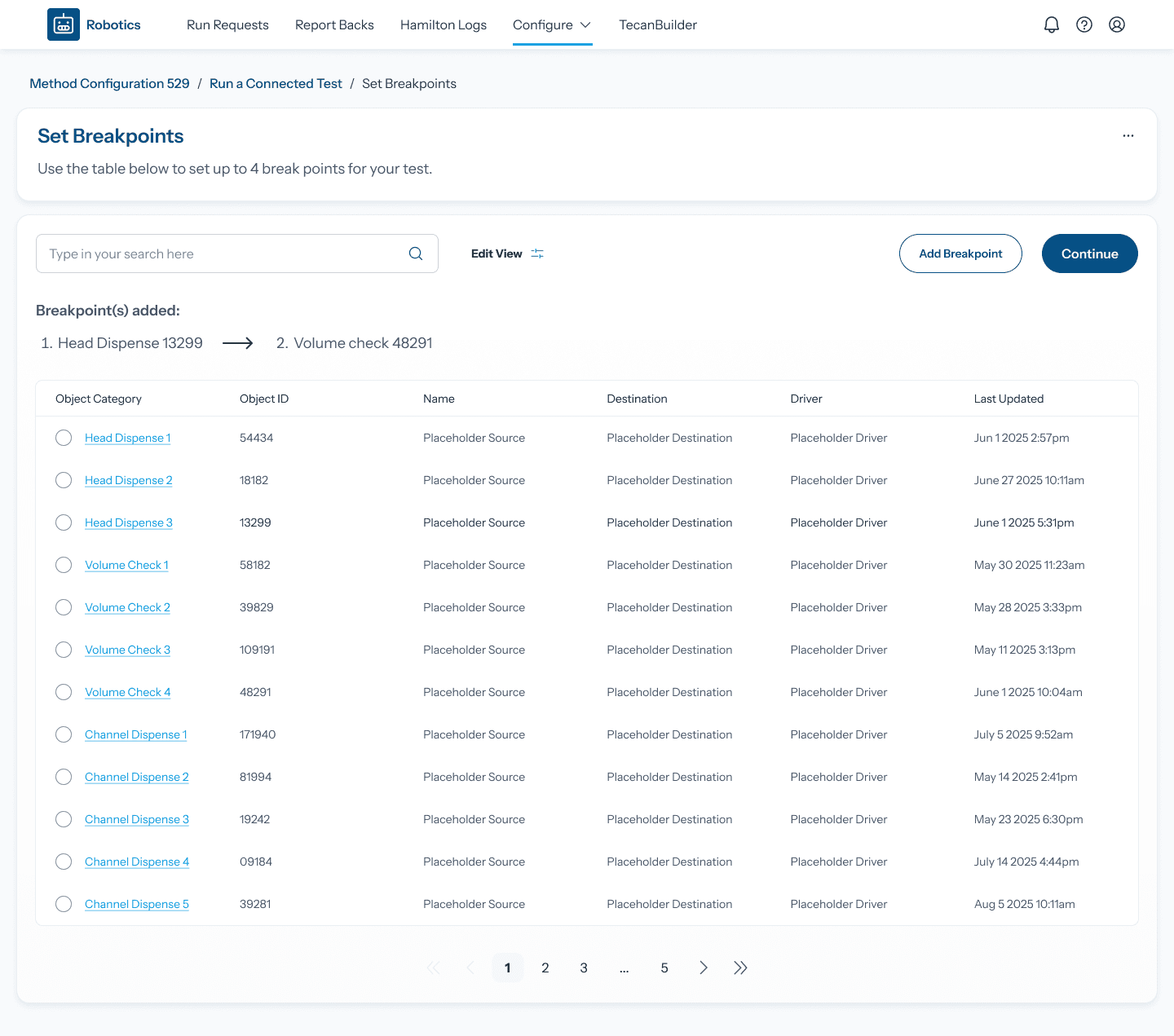

Redesigned UI for modular JSON creation, reducing manual coding and errors. A brand new testing environment for users to iterate on their code safely.

What was the business?

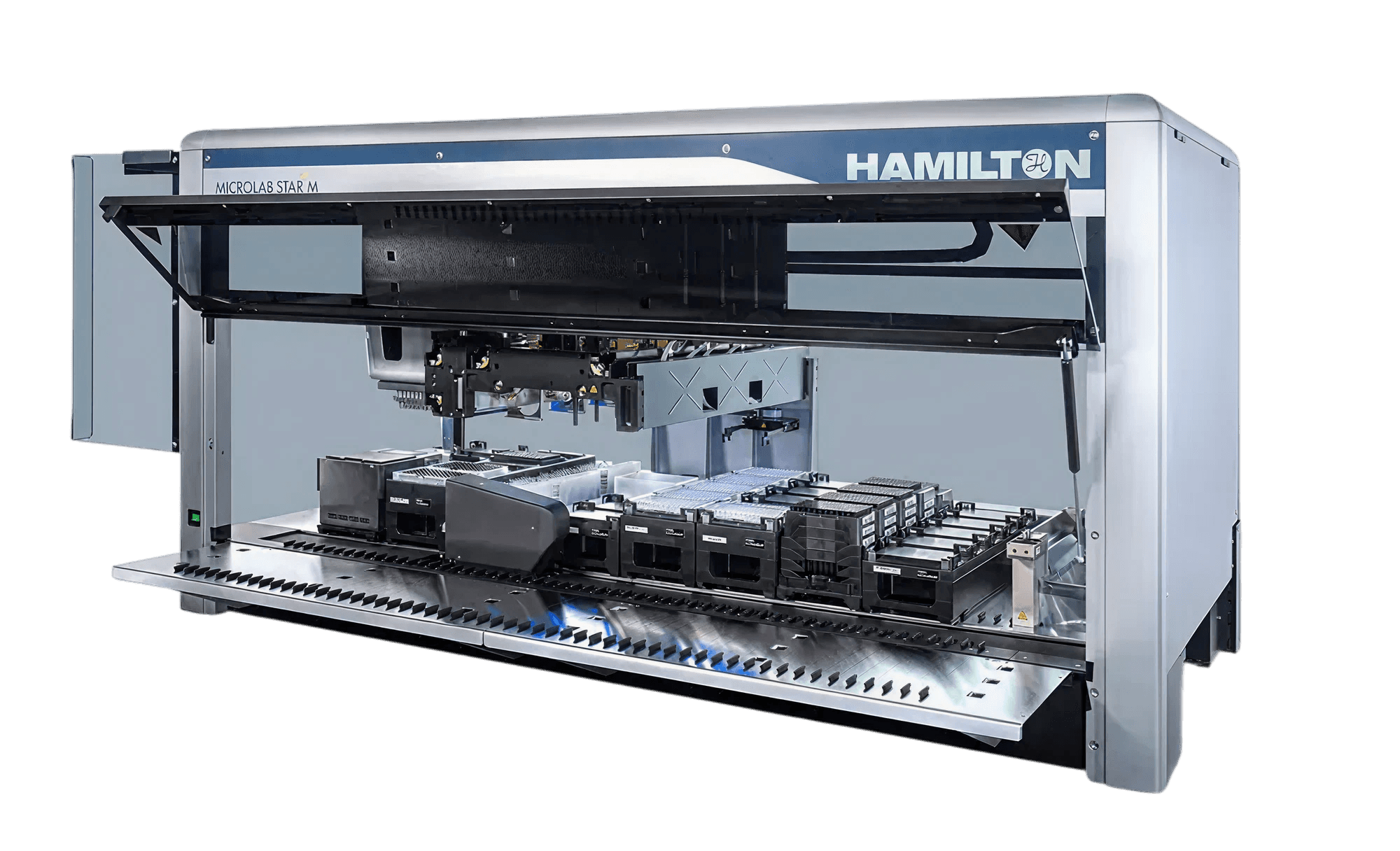

Multinational biotechnology company researching mRNA vaccines using liquid handling robots

What was unique?

Niche app servicing a small team that uses this tool every day. Users had a deep knowledge of robotics. Special requirements arose frequently.

Engineers were spending ~8 hours per run coding instructions in JSON. This was highly inefficient and prone to human error, creating a bottleneck in the research pipeline.

I conducted interview sessions with the engineering team to get an understanding of their workflows and identify issues early. Questions included:

How do they create and manage runs?

How do they collaborate with each other, if at all?

Where do errors and inefficiencies occur in their experience?

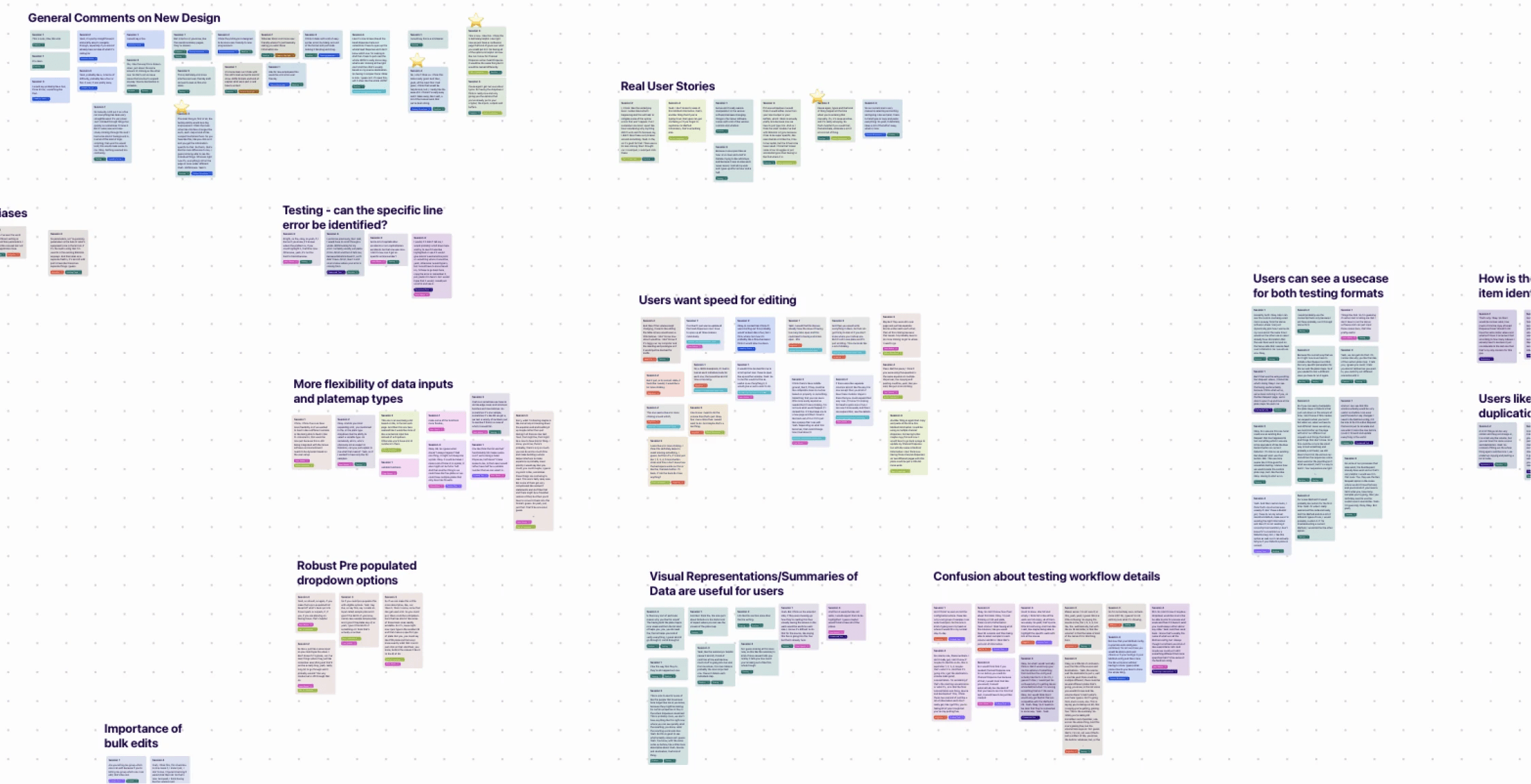

My user flow analysis in Miro

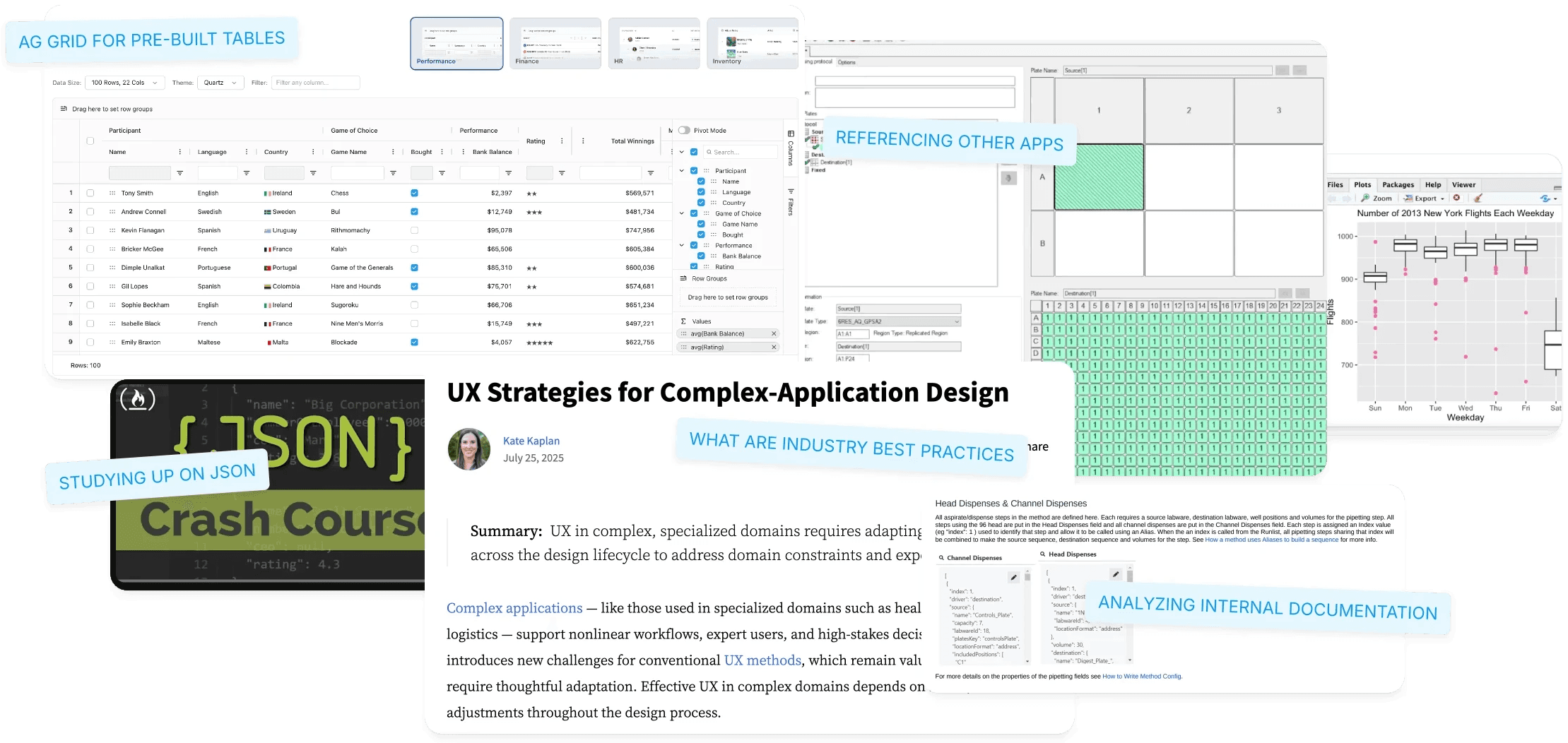

I spent time immersing myself in the client's industry. Focus areas were complex table and application design, JSON fundamentals, and competitors.

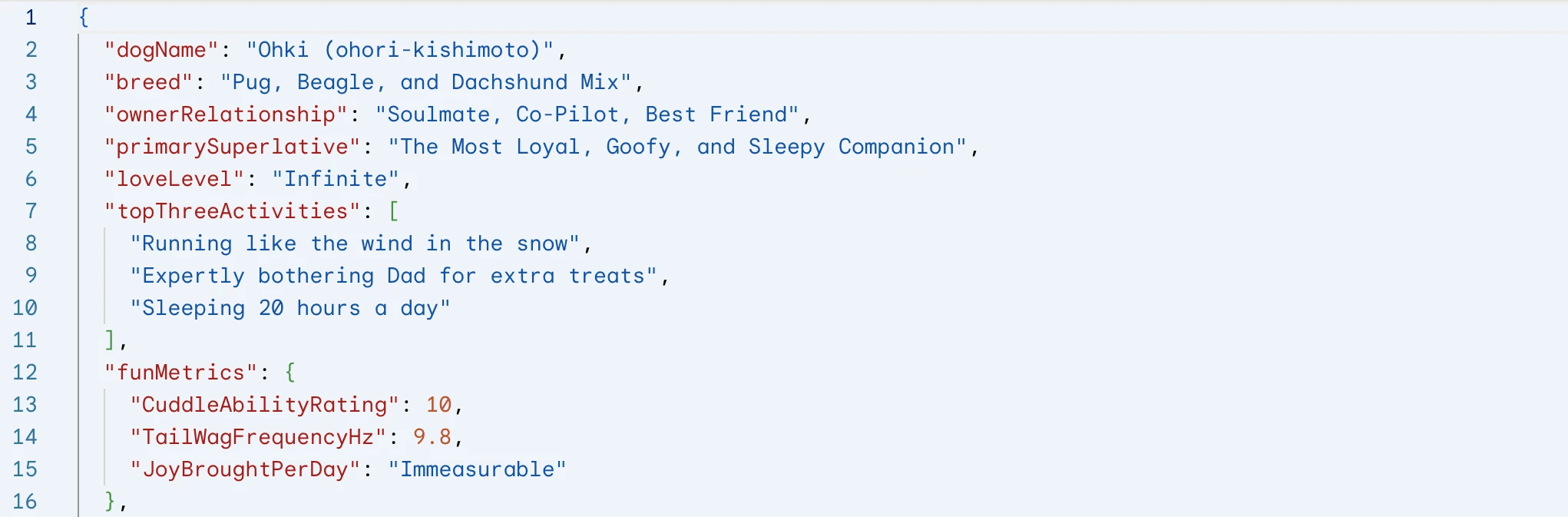

Learning the basics of JSON helped me understand our users better. It improved my communication and made it easier to work together with the engineers.

Director of Software Engineering

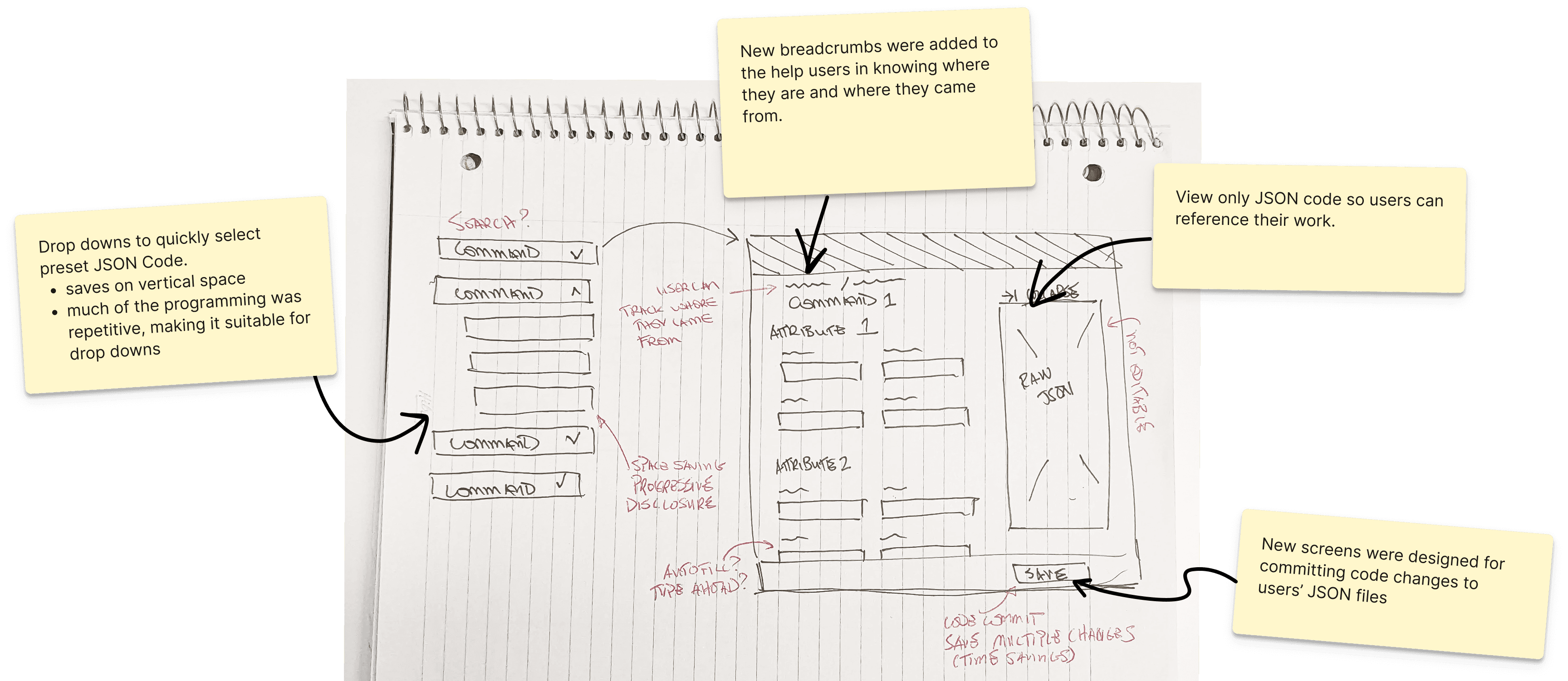

Move from free-form JSON entry to a structured, guided approach.

Implement native functionality to validate and iterate on code before deployment.

Improve the visual clarity and overall user experience of the screens.

✅ Outcome: This drastically reduces errors and accelerates the process for novice users.
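To illustrate the first goal, here is a minimal Python sketch of what a structured, guided approach can mean in practice: the user picks from constrained options and the JSON is generated for them, so syntax errors cannot be typed in. The option lists, field names, and the `Step` and `build_run` helpers are all hypothetical; the client's actual run format is under NDA.

```python
import json
from dataclasses import dataclass, asdict

# Hypothetical option lists, standing in for drop-down choices in the UI.
ACTIONS = ("aspirate", "dispense", "mix")
PLATEMAPS = ("96-well", "384-well")


@dataclass(frozen=True)
class Step:
    """One run step built from guided inputs instead of hand-typed JSON."""
    action: str
    platemap: str
    volume_ul: float

    def __post_init__(self):
        # Reject anything that is not one of the offered options.
        if self.action not in ACTIONS:
            raise ValueError(f"Unknown action: {self.action!r}")
        if self.platemap not in PLATEMAPS:
            raise ValueError(f"Unknown platemap: {self.platemap!r}")
        if self.volume_ul <= 0:
            raise ValueError("volume_ul must be positive")


def build_run(steps):
    """Serialize validated steps; the output is always well-formed JSON."""
    return json.dumps({"steps": [asdict(s) for s in steps]}, indent=2)


run_json = build_run([Step("aspirate", "96-well", 50), Step("dispense", "96-well", 50)])
```

Because every step is checked when it is created, mistakes surface at the moment of input rather than hours later during debugging.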

Before - Main Runlist Page

Users relied on free-form text fields for their JSON. Error validation was limited, and the screen was overwhelming.

After - Main Runlist Page

Why start from scratch?

Users can still import code from external platforms. The system validates it against organizational guidelines. 👇

I tested the designs in wireframe format with 5 of the 12 engineers on the team. The focus was on validating our interface updates and identifying any improvements.

What was discovered?

Prioritization of speed

Expert users had concerns that the redesign would slow them down. We added the ability to import JSON and have the system verify it against internal standards.
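The import check could look something like the Python sketch below. It is only an illustration: the rule set (`ALLOWED_ACTIONS`, `MAX_VOLUME_UL`), the `steps` / `action` / `volume_ul` field names, and the `validate_run` function are assumptions, since the real internal standards are not public.

```python
import json

# Hypothetical rules; the real organizational guidelines are under NDA.
ALLOWED_ACTIONS = {"aspirate", "dispense", "mix"}
MAX_VOLUME_UL = 1000


def validate_run(raw_json: str) -> list[str]:
    """Return a list of readable problems; an empty list means the run passed."""
    try:
        run = json.loads(raw_json)
    except json.JSONDecodeError as exc:
        return [f"Invalid JSON at line {exc.lineno}, column {exc.colno}: {exc.msg}"]

    steps = run.get("steps") if isinstance(run, dict) else None
    if not isinstance(steps, list):
        return ['Run must be an object with a "steps" list']

    problems = []
    for i, step in enumerate(steps, start=1):
        if not isinstance(step, dict):
            problems.append(f"Step {i}: must be an object")
            continue
        action = step.get("action")
        if action not in ALLOWED_ACTIONS:
            problems.append(f"Step {i}: unknown action {action!r}")
        volume = step.get("volume_ul")
        is_number = isinstance(volume, (int, float)) and not isinstance(volume, bool)
        if not is_number or not 0 < volume <= MAX_VOLUME_UL:
            problems.append(
                f"Step {i}: volume_ul must be greater than 0 and at most {MAX_VOLUME_UL}"
            )
    return problems


imported = '{"steps": [{"action": "shake", "volume_ul": 5000}]}'
for problem in validate_run(imported):
    print(problem)
```

Collecting every problem in one pass, rather than stopping at the first, lets an expert user fix an imported run in a single round instead of re-submitting repeatedly.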

More diversity of pre-set inputs

Requests such as alternate platemaps and more drop-down options were taken into account and will be tracked and added to the roadmap.

Testing Environment too conceptual

In response, I designed a merge feature for the test results. See below 👇

The merge feature lets users integrate their test results directly into their existing runlists.
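Conceptually, the merge behaves something like the Python sketch below. This is my own illustration rather than the shipped logic: it assumes each step carries a unique `id`, that a tested step replaces the runlist step with the same `id`, and that new steps are appended. The `merge_test_results` name and the step fields are hypothetical.

```python
from copy import deepcopy


def merge_test_results(runlist, tested_steps):
    """Return a new runlist with tested steps merged in; inputs are not modified."""
    merged = deepcopy(runlist)
    index_by_id = {step["id"]: i for i, step in enumerate(merged)}
    for step in tested_steps:
        if step["id"] in index_by_id:
            # A tested version of an existing step replaces it in place.
            merged[index_by_id[step["id"]]] = deepcopy(step)
        else:
            # A step that only exists in the test environment goes at the end.
            index_by_id[step["id"]] = len(merged)
            merged.append(deepcopy(step))
    return merged


runlist = [
    {"id": "s1", "action": "aspirate", "volume_ul": 50},
    {"id": "s2", "action": "dispense", "volume_ul": 50},
]
tested = [
    {"id": "s2", "action": "dispense", "volume_ul": 40},
    {"id": "s3", "action": "mix", "volume_ul": 20},
]
merged = merge_test_results(runlist, tested)
```

Returning a copy instead of editing the runlist in place keeps the original intact until the user confirms the merge, which matches the goal of iterating safely.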

Positive Feedback from Testing

“I think it's really easy and it takes away a lot of the manual work that we've been doing.”

“Like for new employees this would be a lot more user friendly.”

“The main thing is the testing ability would be a big improvement.”

“The dropdown I think is really nice... only giving you the options that you've already put in.”

✅ Successes

All three goals were met: 1. Reduce manual coding, 2. Create a new testing area, 3. Modernize the visual organization of screens.

The client's team was provided with a complete set of dev-ready UI designs to get the ball rolling.

🗺️ Opportunities

Improved, more targeted error highlighting with clearer guidance.

The ability to "templatize" entire runs (not just individual steps) to save even more time.

A robust alerts and commenting system to promote collaboration and version control between users.